Couldn’t believe it when I received a tweet back today. Could AJA Kona 3 owners really be in luck with Avid Media Composer? It’s looking good to me!

Is the trust in Apple gone for good?

In the blink of an eye, the release of Final Cut Pro X has caused a ripple in the Matrix so huge, I’m not sure Neo could even fix this …

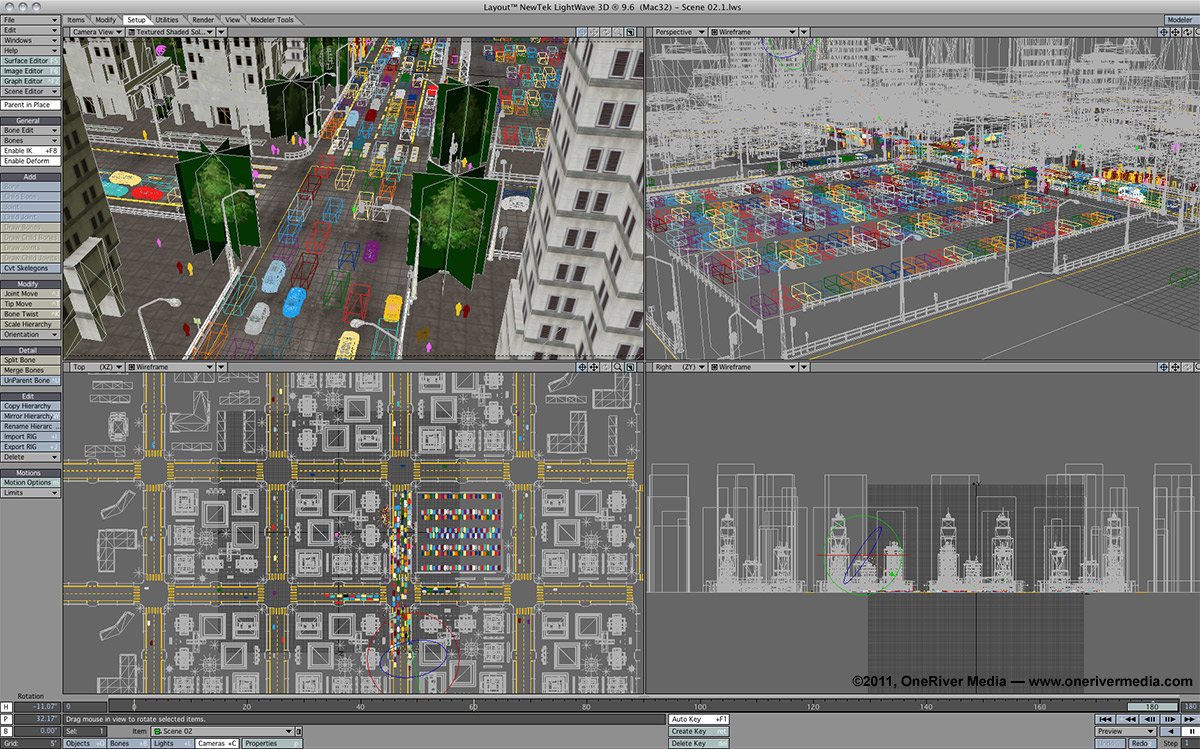

Adding 3D Modeling & Animation to your Creative Arsenal

With clients demanding ever more creative options from you, their creative go-to person, you might want to seriously consider learning 3D modeling and animation. Today there are …

Final Cut Pro X: Avid’s greatest marketing success?

It’s official. Today, after 15 years of using NLEs, OneRiver Media officially purchased our first licensed seat of Avid Media Composer. This was actually a long time coming, as we’re …

Tuesday Tip #6 – QuickTime Player 10 Blows

QuickTime Player 10 (“X”) has been out for over a year now (since the release of Snow Leopard), but some people may not know they can still use QuickTime Player …

Tuesday Tip #5 – Resetting Clip Numbers on Canon DSLRs

Resetting your camera’s clip number can really make things easier on your production and the post-production process. This is especially useful when you have a crew member on set who’s …

Tuesday Tip #4 – Dynamic Audio Compression

Sadly, it seems as though audio is always on the back burner for a lot of video editors. But as I like to say, good audio WILL make your video …

Multicam HDSLR with Timecode

If you’re interested in shooting your HDSLR cameras with timecode, it’s a lot easier than you might think. This article describes the method I’ve developed and use on sets to …